Welcome to the preliminary findings of the 2026 North American Prospect Development Benchmark Study, a groundbreaking effort to establish the first comprehensive, data-driven benchmarks for prospect development operations across the continent.

This report represents an exciting work in progress, with fielding still underway and insights continuing to evolve.

With data from 220 participating organizations, this study offers an early glimpse into the practices, investments, and outcomes shaping the field of prospect development. From staffing and productivity benchmarks to the relationship between operational maturity and revenue influence, these findings are designed to inform and empower both practitioners and leadership.

Stay tuned as we refine and expand this benchmark, and thank you for being part of this important journey!


Findings overview

This section highlights the key findings from the 2026 North American Prospect Development Benchmark Study, crafted to provide immediate value for senior leadership and board-level discussions. Among the 220 participating organizations, the study reveals a field that is meaningfully invested in research operations but still developing the pipeline discipline needed to connect research effort to fundraising outcomes.

Research investment at a glance

To give you the clearest picture of the current landscape, all figures below are presented as medians with interquartile ranges (IQR). Think of these numbers as a compass rather than a strict map: use them as benchmarks, not prescriptions, for your own shop. Among participating organizations, the median research staffing is 1.5 FTE, with a median annual research spend of $112,450. Tool and technology expenditures sit at a median of $17,450. These figures reflect substantial variation across the sample, with smaller organizations often operating with fractional research staff and limited budgets while larger institutions maintain multi-person teams with six-figure technology stacks.

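The median organization researches 175 prospects annually and qualifies 75 of those. The median cost per qualified prospect is $500; because it is computed for each organization and then summarized, it is not simply the median research spend divided by the median number of qualified prospects. These productivity benchmarks offer practitioners a baseline for evaluating their own operations against the broader field.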

Note: a glossary of terms can be found here.

| Metric | Median | IQR |
| --- | --- | --- |
| Research FTE | 1.5 | [0.2, 3.0] |
| Research spend | $112,450 | [$12,500, $224,950] |
| Tool spend | $17,450 | [$5,000, $37,450] |
| Prospects researched | 175 | [50, 750] |
| Prospects qualified | 75 | [25, 300] |
| Cost per qualified prospect | $500 | [$500, $1,500] |

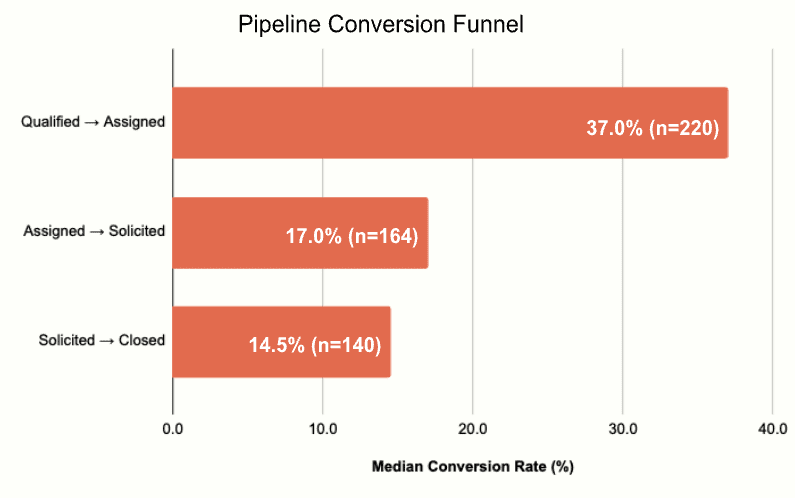

The pipeline conversion funnel

The conversion funnel reveals where prospect research connects to fundraising action. Among respondents who track these metrics, the median rates are: Qualified-to-Assigned at 37% (n=220), Assigned-to-Solicited at 17% (n=164), and Solicited-to-Closed at 14.5% (n=140). The declining sample sizes at each stage are themselves a finding: 25% of respondents do not track solicitation rates, and 36% do not track close rates, suggesting that many organizations lack visibility into the downstream impact of their research. If your organization doesn’t track solicitation or close rates, you’re not alone—but you’re also flying blind on whether your research is translating into asks and gifts.

For example, for a median organization with a 75-prospect portfolio:

~28 are assigned to an MGO

~13 are solicited

~11 have a gift close
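The arithmetic behind this example can be sketched as follows. The figures above match each median rate applied to the same base of 75; the report does not say whether the rates are meant to be read that way or stage to stage, so the sketch shows both readings. It also reproduces the "not tracked" shares from the reported sample sizes. The function names are illustrative, not part of the study.

```python
# Median stage rates and sample sizes reported in the funnel section.
RATES = {"assigned": 0.37, "solicited": 0.17, "closed": 0.145}
TOTAL_RESPONDENTS = 220


def counts_from_base(base: float) -> dict:
    """Apply every rate to the same starting count (matches the example figures)."""
    return {stage: base * rate for stage, rate in RATES.items()}


def counts_chained(base: float) -> dict:
    """Apply each rate to the output of the previous stage (stage-to-stage reading)."""
    out = {}
    remaining = float(base)
    for stage, rate in RATES.items():
        remaining *= rate
        out[stage] = remaining
    return out


def not_tracked_share(n_tracking: int, total: int = TOTAL_RESPONDENTS) -> float:
    """Share of respondents who did not report a given rate."""
    return (total - n_tracking) / total


base_counts = counts_from_base(75)
chained_counts = counts_chained(75)
solicit_gap = not_tracked_share(164)  # respondents not tracking solicitation rates
close_gap = not_tracked_share(140)    # respondents not tracking close rates
```

Under the stage-to-stage reading the downstream counts are much smaller than in the example, so it is worth confirming which definition your own CRM reports use before comparing against these medians.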

Maturity at a glance

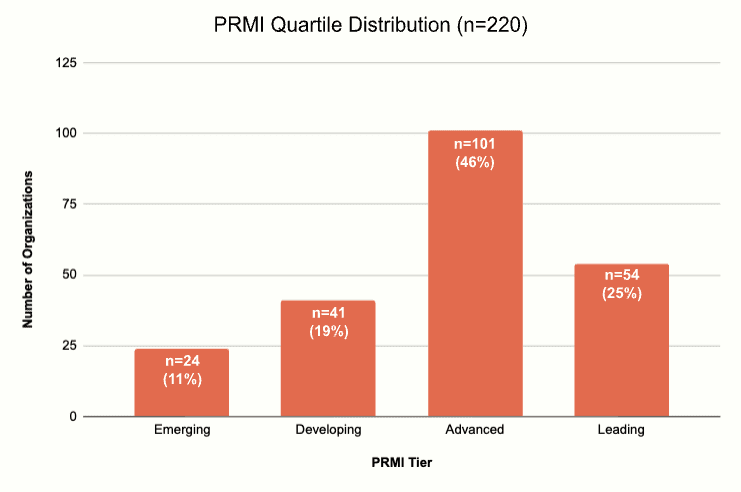

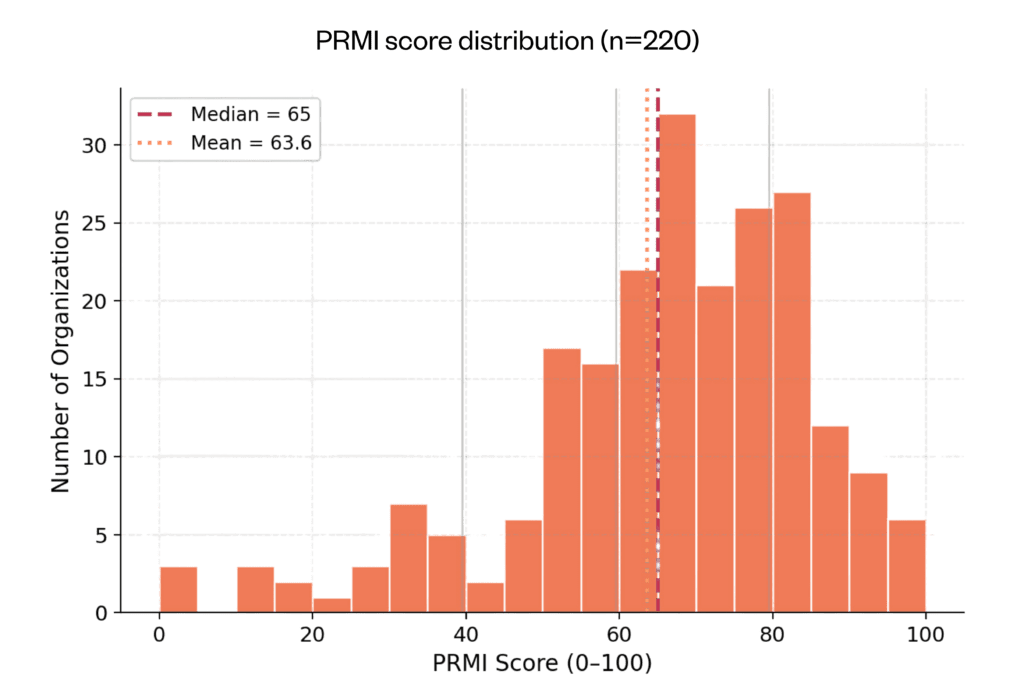

The Prospect Research Maturity Index (PRMI) provides a composite measure of operational maturity based on five self-assessed practices: CRM logging, gift officer action on research, pipeline tracking, portfolio hygiene, and ability to demonstrate ROI. Among all 220 respondents, the median PRMI score is 65 (IQR: 55–75) on a 0–100 scale. The largest single group (46%) falls in the Advanced tier (scores 60–79), while 25% reach Leading (80–100). A combined 30% remain in the Emerging (11%) or Developing (19%) tiers, indicating that nearly a third of organizations have significant room for maturity improvement.

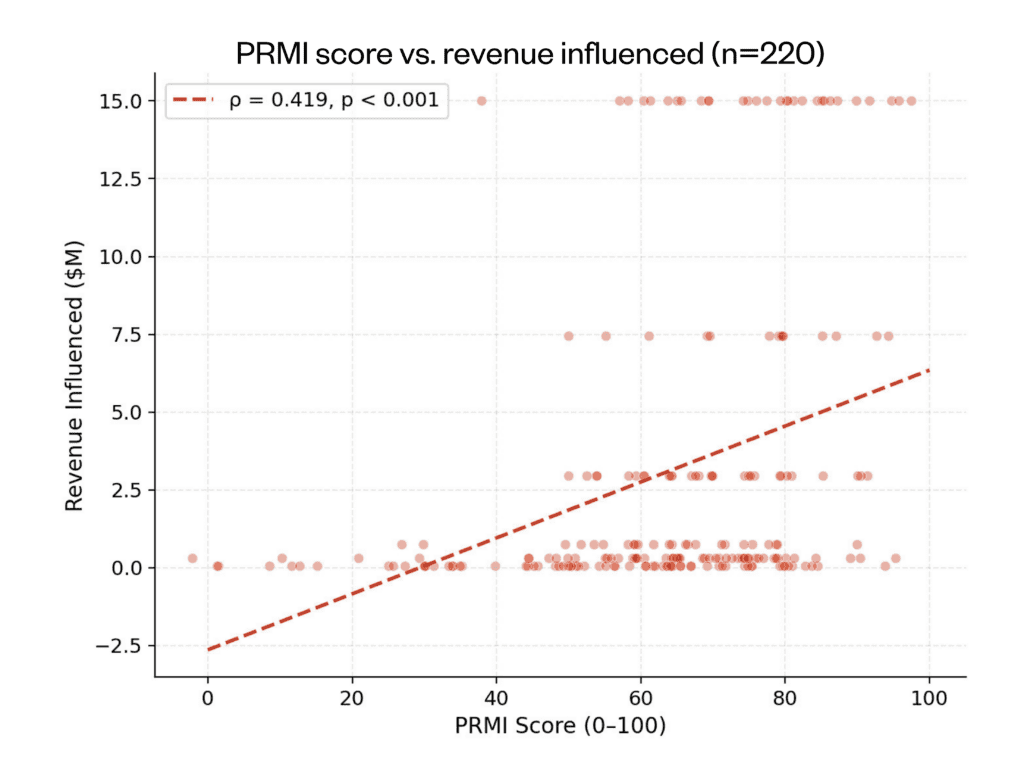

The strongest signal: maturity and revenue

The most robust finding in this study is the relationship between organizational maturity and revenue influenced by research. Among all 220 respondents, PRMI score correlates with self-reported revenue influenced at ρ = 0.419 (p < 0.001), the strongest correlation with PRMI reported in the study. This association holds after controlling for organizational revenue (ρ = 0.278, p < 0.001) and remains robust among respondents with higher data confidence (ρ = 0.408, p < 0.001, n=217). These results suggest that organizations reporting higher maturity practices also report greater revenue attributed to research, though the cross-sectional design means we cannot establish causation.

Want to see where your organization sits on the Prospect Research Maturity Index?

Operational performance benchmarks

This section provides the operational benchmarks that practitioners can use to evaluate their team’s capacity, productivity, and cost efficiency against the broader field.

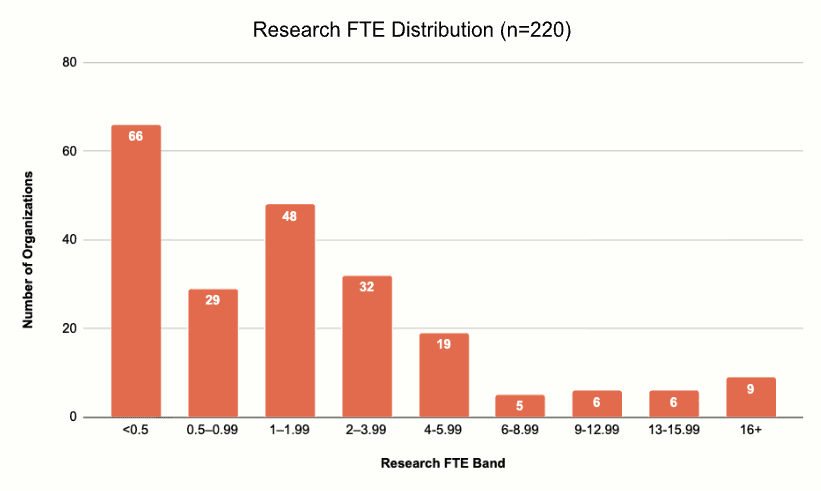

Research staffing

Research FTE allocation varies dramatically across the sample. The median of 1.5 FTE masks a distribution that ranges from organizations with less than half an FTE dedicated to research (often a shared function) to institutions with 20 or more full-time research professionals. The interquartile range of 0.2–3.0 FTE captures the core of the field, while the long right tail reflects large university advancement offices and healthcare systems that have invested in dedicated research teams.

Staffing the machine: Research FTE, Gift Officer FTE, and the ratio between them

One of the most practical questions a prospect development leader can bring to a budget conversation is: how many research staff should we have for our number of gift officers? The data from 220 organizations provides the clearest answer the field has had to date, and it is not the answer most people expect.

The core finding

Research FTE and MGO FTE are strongly correlated at ρ = 0.829 (p < 0.001, n = 220). This is one of the strongest associations in the entire dataset, behind only the correlations of total advancement staff with MGO count (ρ = 0.880) and with research FTE (ρ = 0.834). Research teams and frontline fundraising teams scale together in near-lockstep.

The median ratio across all 220 organizations is one research FTE for every five major gift officers (0.19). The interquartile range runs from 0.17 to 0.38, meaning the middle half of organizations staff somewhere between one researcher for every six MGOs and one researcher for every three.

That ratio is not evenly distributed. It shifts meaningfully with organizational maturity.

How the ratio changes with maturity

Among Emerging-tier organizations (PRMI 0–39, n = 24), the median ratio is 0.17, or one researcher for roughly every six MGOs. In practice, most of these organizations have less than half a research FTE and one to two gift officers, so “ratio” overstates the formality of the arrangement. Many of these shops have no dedicated researcher at all; someone on the development team does lookups when time permits.

Developing-tier organizations (PRMI 40–59, n = 41) show the same 0.17 ratio but with slightly more headcount on both sides: a median 0.75 research FTE supporting a median 1.5 MGOs. The research function exists but is typically a fraction of someone’s job.

Advanced-tier organizations (PRMI 60–79, n = 101) edge up to 0.19, with a median of 1.5 research FTE supporting 4.0 MGOs. This is the tier where most organizations have at least one dedicated researcher, and the largest segment of the dataset sits here.

Leading-tier organizations (PRMI 80–100, n = 54) are where the ratio shifts noticeably: 0.38, or roughly one researcher for every 2.6 gift officers. The median research team is 3.0 FTE supporting 8.0 MGOs. These organizations staff research at roughly twice the research-to-MGO ratio of the rest of the field.

The ratio itself is positively correlated with PRMI (ρ = 0.290, p < 0.001) — organizations that devote proportionally more research capacity relative to their frontline are measurably more mature in their operations. It is also associated with more qualified prospects entering the pipeline (ρ = 0.286, p < 0.001) and modestly with higher total revenue (ρ = 0.216, p = 0.001).

What the MGO bands actually look like

The scaling relationship is not linear. Research capacity grows in steps, and the data shows where those steps tend to fall:

| MGO band | Median research FTE | Typical ratio | n |
| --- | --- | --- | --- |
| 1–2 MGOs | 0.25 | 1:6 | 89 |
| 3–5 MGOs | 1.50 | 1:3 | 34 |
| 6–10 MGOs | 1.50 | 1:5 | 33 |
| 11–20 MGOs | 3.00 | 1:5 | 22 |
| 21+ MGOs | 7.50 | 1:4 | 42 |

The jump from 1–2 MGOs to 3–5 MGOs is where organizations typically add their first full-time researcher. Below that threshold, research is usually a partial responsibility. Above it, the ratio stabilizes in the 1:3 to 1:5 range, suggesting that once a research function exists, it scales at a fairly consistent rate with the frontline.
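As a rough way to place your own shop against these bands, the sketch below looks up the band median research FTE for a given MGO count and reports the gap. The band medians come from the table; the function names, and the choice to place fractional MGO counts that fall between bands (for example 2.5) in the next band up, are assumptions made for illustration.

```python
# (label, upper MGO bound, median research FTE) taken from the MGO band table.
# None marks the open-ended 21+ band.
MGO_BANDS = [
    ("1-2 MGOs", 2, 0.25),
    ("3-5 MGOs", 5, 1.50),
    ("6-10 MGOs", 10, 1.50),
    ("11-20 MGOs", 20, 3.00),
    ("21+ MGOs", None, 7.50),
]


def band_for(mgo_fte: float) -> tuple:
    """Return (band label, band median research FTE) for an MGO count."""
    if mgo_fte <= 0:
        raise ValueError("mgo_fte must be positive")
    for label, upper, median_fte in MGO_BANDS:
        # Assumption: a count between two bands is placed in the higher band.
        if upper is None or mgo_fte <= upper:
            return label, median_fte
    raise AssertionError("unreachable: the last band is open-ended")


def compare_to_band(research_fte: float, mgo_fte: float) -> dict:
    """Compare a shop's research staffing with the median for its MGO band."""
    label, median_fte = band_for(mgo_fte)
    return {
        "band": label,
        "band_median_research_fte": median_fte,
        "gap_vs_band_median": research_fte - median_fte,
        "research_to_mgo_ratio": research_fte / mgo_fte,
        "mgos_per_researcher": mgo_fte / research_fte if research_fte > 0 else None,
    }


# Hypothetical shop: half a research FTE supporting four gift officers.
result = compare_to_band(research_fte=0.5, mgo_fte=4)
```

A negative gap means the shop sits below the band median. As the caveats note, the underlying FTE values were collected in bands, so treat the gap as directional.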

The 89 organizations with only 1–2 MGOs represent 40% of the sample. Their median research allocation of 0.25 FTE — a quarter of someone’s time — is the single most common staffing configuration in the dataset.

The understaffed outlier

Seven organizations in the sample reported 8 or more MGOs but less than 1.0 research FTE. Their median PRMI score is 60 — solidly Advanced, but notably lower than the 80 scored by Leading-tier organizations with similar MGO counts and properly staffed research teams. These are organizations large enough to deploy a meaningful frontline but not yet investing in the research infrastructure to support it. They are, in a sense, building a house without a foundation.

The small-shop reality

Fifty-nine organizations — 27% of the sample — have less than half a research FTE and only one to two gift officers. Their median PRMI is 55. Two-thirds are independent nonprofits. Nearly all have total revenue under $5 million.

The finding that matters for these organizations is not the 1:5 ratio — it’s the behavioral correlations. Lookup frequency is associated with PRMI at ρ = 0.390, stronger than the correlation between research FTE and PRMI (0.384). In other words, how often you look matters at least as much as how many people you have looking. A half-time researcher who checks every donor before every meeting may contribute more to operational maturity than a full-time researcher who produces quarterly profiles that sit in a shared drive.

The ratio does not predict efficiency

One of the more interesting null findings: the research-to-MGO ratio is not significantly correlated with cost per qualified prospect (ρ = 0.053, p = 0.43). Adding proportionally more researchers does not appear to lower — or raise — the unit cost of producing a qualified prospect. The implication is that the ratio affects throughput and maturity but not unit economics. A 1:3 ratio and a 1:6 ratio produce qualified prospects at roughly the same cost per head; the 1:3 shop simply produces more of them and operates more systematically.

This is consistent with the broader null finding that cost per qualified prospect is uncorrelated with PRMI (ρ = 0.026), revenue (ρ = 0.046), or almost anything else in the dataset. Unit cost appears to be driven by local factors — salary markets, tool choices, prospect complexity — rather than structural staffing decisions.

What this means for practitioners

If you have 3–5 MGOs and no dedicated researcher, you are below the field median. The data suggest that the transition from fractional research capacity to at least one full-time FTE is associated with measurable gains in maturity and pipeline output. This is the strongest single-hire case in the dataset.

If you have 6+ MGOs, the benchmark ratio is 1:3 to 1:5. If you have fewer than one researcher for every five MGOs, your research team is likely a bottleneck: not because the individuals are underperforming, but because the volume of prospects flowing through the pipeline exceeds what the available research capacity can meaningfully touch.

If you are a one-person shop, the ratio is less relevant than the habit. The organizations that score highest on PRMI among small shops are not the ones with the most research FTE — they are the ones that look up donors most frequently, log findings in the CRM, and act on what they find. Process discipline substitutes for headcount at the bottom of the staffing curve.

Caveats

The 1:5 median ratio describes this sample of 220 self-selected organizations, not a universal standard. Organizations with unusual prospect profiles (very high net worth, very long cultivation cycles, heavy planned giving) may reasonably staff differently. The ratio also does not account for the type of research — a team producing detailed major gift profiles operates at a different ratio than a team focused on batch screening and data hygiene. Finally, because both FTE measures were collected in bands, the ratio is an approximation. The true median could reasonably fall between 1:4 and 1:6.

Productivity: Prospects per FTE

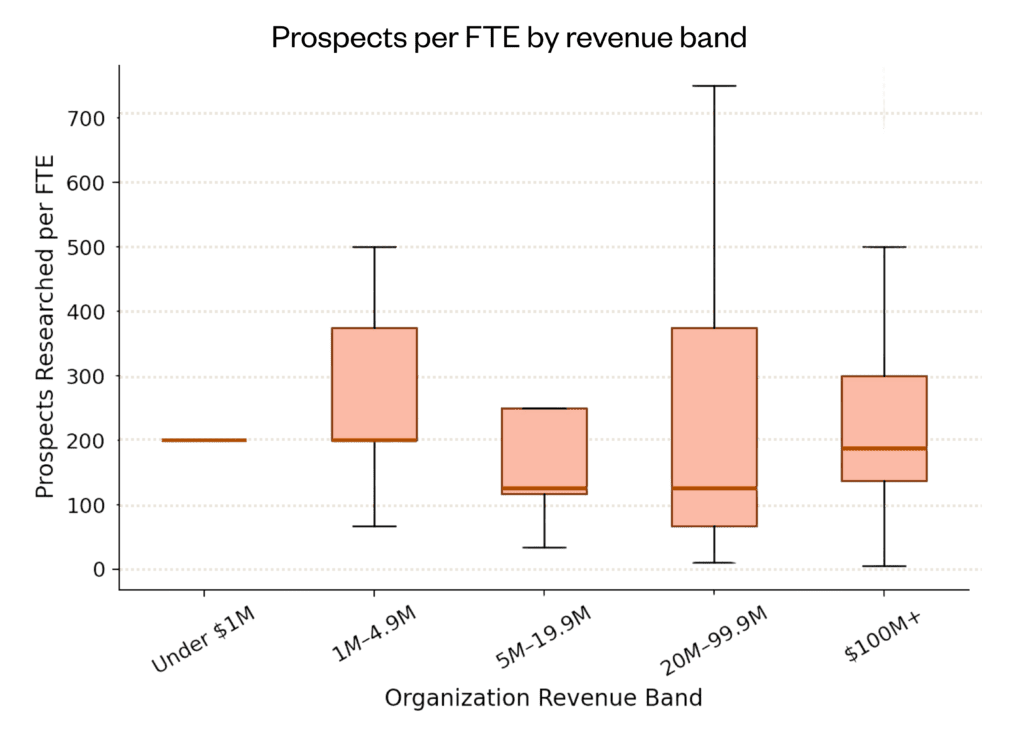

Among all participating organizations, the median number of prospects researched per FTE is 200 (IQR: 117–250). This metric varies meaningfully by organization size. Smaller organizations tend to report higher prospects-per-FTE ratios—not necessarily because they are more efficient, but because fractional-FTE research functions often handle a mix of quick lookups and research deliverables such as deep profiles. Larger organizations with dedicated teams tend to produce fewer but deeper research products per FTE.

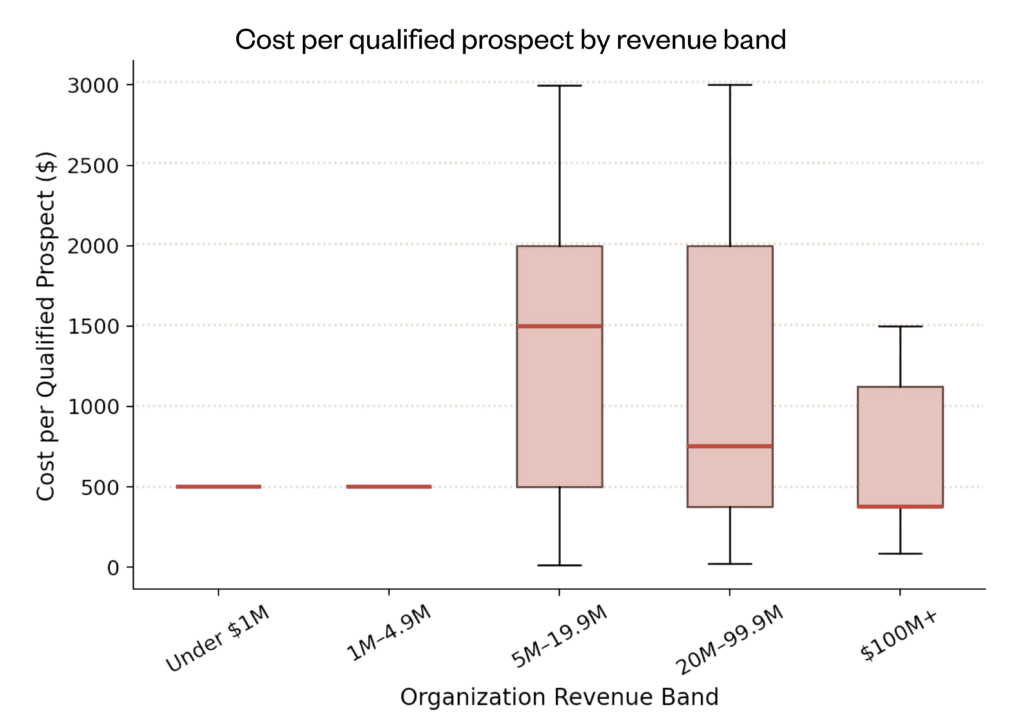

Cost efficiency: cost per qualified prospect

The median cost per qualified prospect is $500 (IQR: $500–$1,500). This figure is derived by dividing the midpoint of each respondent’s research spend band by the midpoint of their qualified prospects band. As a band-based estimate, this metric should be interpreted as an order-of-magnitude benchmark rather than a precise figure. The relatively tight clustering around $500 suggests a floor effect from the band structure, while the upper IQR of $1,500 reflects organizations that either spend more on research or qualify fewer prospects.
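The band-midpoint calculation described above can be sketched as follows. The survey's actual response bands are not listed in this report, so the bands in the example are hypothetical and chosen only to show the arithmetic.

```python
def band_midpoint(low: float, high: float) -> float:
    """Midpoint of a banded survey response."""
    return (low + high) / 2


def cost_per_qualified(spend_band: tuple, qualified_band: tuple) -> float:
    """Midpoint of the research spend band divided by midpoint of the qualified-prospects band."""
    spend_mid = band_midpoint(*spend_band)
    qualified_mid = band_midpoint(*qualified_band)
    if qualified_mid <= 0:
        raise ValueError("qualified-prospects midpoint must be positive")
    return spend_mid / qualified_mid


# Hypothetical bands, not the survey's actual response options.
example_a = cost_per_qualified((10_000, 15_000), (20, 30))  # 12,500 / 25
example_b = cost_per_qualified((10_000, 15_000), (1, 9))    # 12,500 / 5
```

Because both inputs can only take a limited set of midpoint values, the resulting cost figure also takes a limited set of values, which is consistent with the clustering noted above.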

A null result: Spend does not predict productivity

One of the study’s most important findings is what it did not find. Research spend showed no statistically significant correlation with productivity per FTE (ρ = −0.058, p = 0.389, n=220). This null result, designated Core Test 4, suggests that spending more does not automatically translate to producing more research per staff member. The implication for practitioners is significant: budget advocacy should emphasize what the investment enables (pipeline quality, revenue influence, maturity practices) rather than a simple input-output productivity argument.

Portfolio health benchmarks

Portfolio health indicators reveal how effectively organizations manage their prospect pipelines after research has been completed. These metrics speak directly to the handoff between research and frontline fundraising.

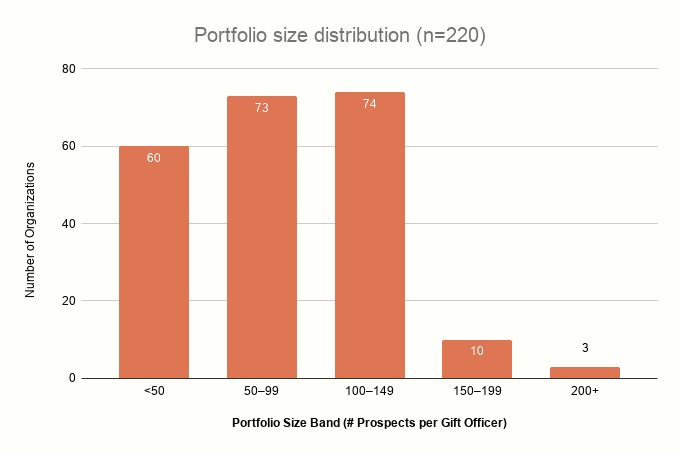

Portfolio Size

The median portfolio size among respondents is 75 prospects (IQR: 25–125). This refers to the number of prospects actively assigned to gift officers at a given time. The distribution skews toward smaller portfolios, with a substantial proportion of organizations maintaining portfolios under 50 prospects.

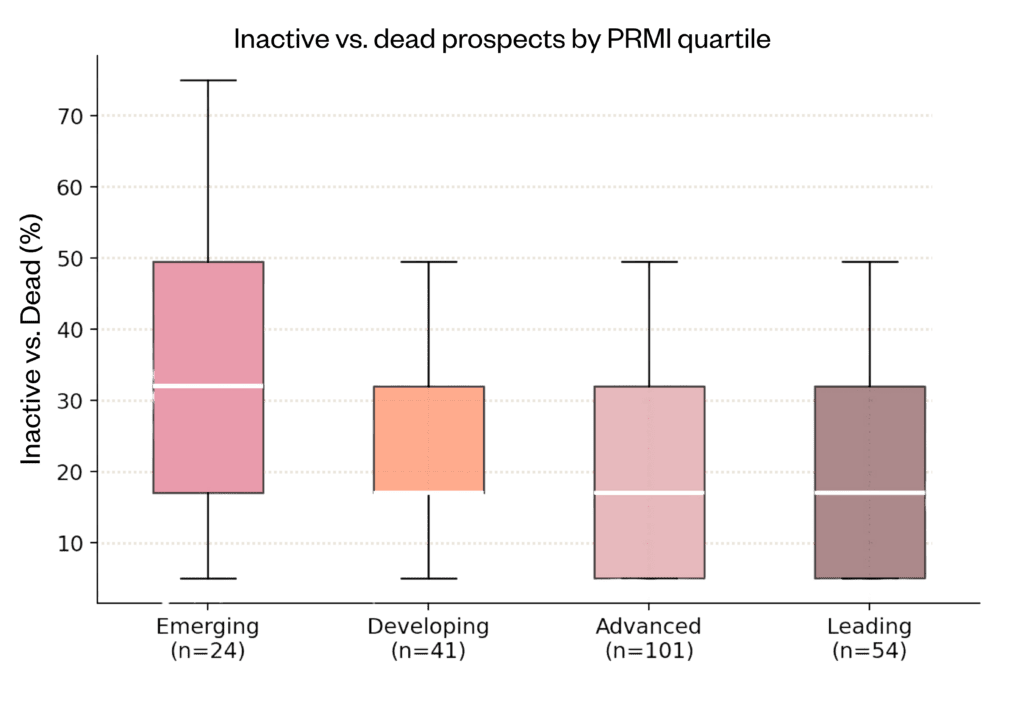

Inactive prospects and maturity

The proportion of inactive or stalled prospects in the pipeline provides a signal of portfolio hygiene. Across the full sample, the median inactive prospect percentage is 17% (IQR: 5–32%). When examined by PRMI tier, a pattern emerges: organizations in the Emerging tier report higher inactive prospect rates, while those in the Leading tier report lower rates. This relationship is statistically significant but modest (ρ = −0.148, p = 0.029), consistent with the intuition that more mature organizations are better at pruning unproductive prospects from their pipelines.

Assignment rates and maturity

The percentage of qualified prospects that are assigned to gift officers correlates meaningfully with maturity. Among all respondents, PRMI and assignment rate show ρ = 0.312 (p < 0.001), designated Core Test 1. This finding suggests that more mature organizations are not only better at research—they are better at ensuring research gets used. The assignment rate may be the most actionable pipeline metric for organizations seeking to improve their research ROI: if qualified prospects are not being assigned, the research investment is stranded.

Screening & discipline benchmarks

Screening and lookup practices reflect the operational discipline of the research function. This section examines how frequently organizations conduct batch screening reviews and individual prospect lookups.

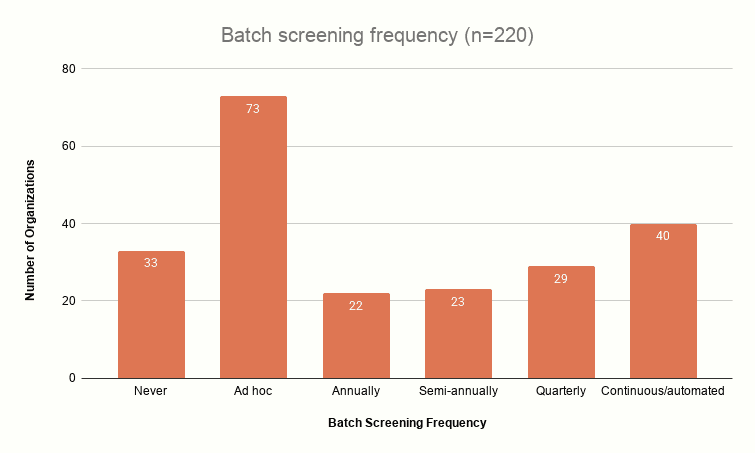

Batch screening frequency

Batch screening—the systematic review of prospect pools using wealth screening tools or other data sources—varies considerably. The most common response is ad hoc, though a substantial minority operate on an automated basis. Regular screening frequency correlates with the number of prospects qualified (ρ = 0.328, p < 0.001, Core Test 3), one of the stronger associations in the study. Organizations that screen more regularly identify more qualified prospects, which in turn feeds the conversion pipeline.

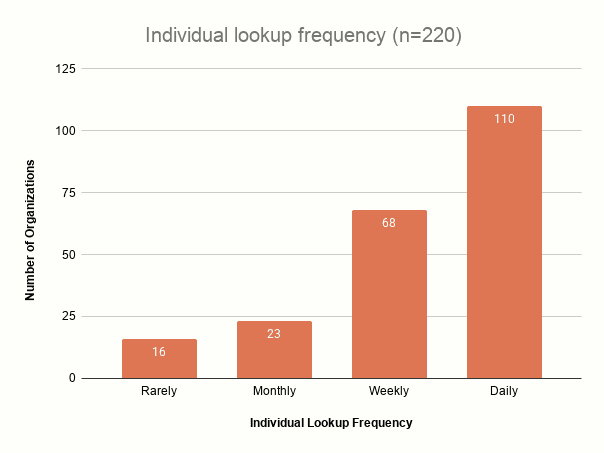

Individual lookup frequency

Individual prospect lookups—on-demand research typically triggered by gift officer requests or event preparation—are more uniformly distributed. Daily lookups are common among organizations with dedicated research staff, while less-resourced organizations tend toward weekly or monthly rhythms.

Maturity index findings

The Prospect Research Maturity Index (PRMI) is a composite measure computed from five self-assessed Likert-scale items (1–5 each). The raw sum (range 5–25) is normalized to a 0–100 scale and classified into four tiers: Emerging (0–39), Developing (40–59), Advanced (60–79), and Leading (80–100).
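A minimal sketch of the scoring described above, assuming the normalization is a simple min-max rescaling of the raw sum (so a raw 5 maps to 0 and a raw 25 maps to 100). The report does not give the exact formula, so treat the rescaling as an assumption; the tier cut-offs are the ones stated above.

```python
def prmi_score(items: list) -> float:
    """Rescale five 1-5 Likert responses to a 0-100 score (assumed min-max normalization)."""
    if len(items) != 5 or any(not 1 <= i <= 5 for i in items):
        raise ValueError("expected five responses, each between 1 and 5")
    raw = sum(items)              # raw sum ranges from 5 to 25
    return (raw - 5) * 100 / 20   # 5 -> 0, 25 -> 100


def prmi_tier(score: float) -> str:
    """Map a 0-100 score to the four tiers defined in this section."""
    if score < 40:
        return "Emerging"
    if score < 60:
        return "Developing"
    if score < 80:
        return "Advanced"
    return "Leading"


# Hypothetical responses for the five self-assessed practices.
score = prmi_score([4, 4, 4, 3, 3])
tier = prmi_tier(score)
```

Under this assumption every score is a multiple of 5, which fits the reported median of 65 and IQR of 55–75.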

A note on the PRMI: This is an exploratory index based on five self-assessed items. Its internal reliability (Cronbach’s α = 0.733) only narrowly clears the commonly cited 0.70 threshold for acceptable reliability. It’s a useful directional tool, not a diagnostic instrument. We will refine it in future administrations.
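For readers who want to check reliability on their own item-level data, the standard Cronbach's α formula is α = k/(k−1) × (1 − Σ item variances / variance of total scores), where k is the number of items. The sketch below implements that textbook formula with sample variances; it is not the study's own code, and the example data are made up.

```python
from statistics import variance


def cronbach_alpha(rows: list) -> float:
    """Cronbach's alpha for a list of respondents, each a list of item scores."""
    k = len(rows[0])
    if k < 2 or len(rows) < 2 or any(len(r) != k for r in rows):
        raise ValueError("need at least two respondents and two items per respondent")
    # Sample variance of each item across respondents.
    item_variances = [variance([r[i] for r in rows]) for i in range(k)]
    # Sample variance of each respondent's total score.
    total_variance = variance([sum(r) for r in rows])
    return k / (k - 1) * (1 - sum(item_variances) / total_variance)


# Made-up responses: four respondents, two items.
alpha = cronbach_alpha([[2, 3], [4, 3], [3, 5], [5, 5]])
```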

Score distribution

The PRMI distribution is left-skewed, with a mean of 63.6 and a median of 65. The largest share of organizations (46%) falls in the Advanced tier, with the next-largest group in the Leading tier (25%). The concentration in the Advanced range suggests that the median participating organization has adopted most maturity practices to some degree but has not yet achieved the consistent, measurable integration reflected in Leading scores.

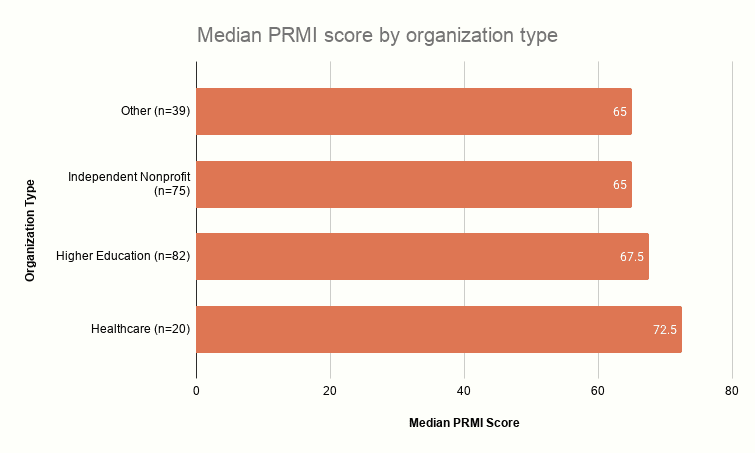

Maturity by organization type

Healthcare organizations report the highest median PRMI score (72.5, n=20), followed by Higher Education (67.5, n=82), Independent Nonprofits (65.0, n=75), and Other organization types (65.0, n=39). The Community Foundation category (n=4) is suppressed due to insufficient sample size. The healthcare finding, while based on a smaller sample, aligns with the sector’s emphasis on data-driven donor management and compliance documentation.

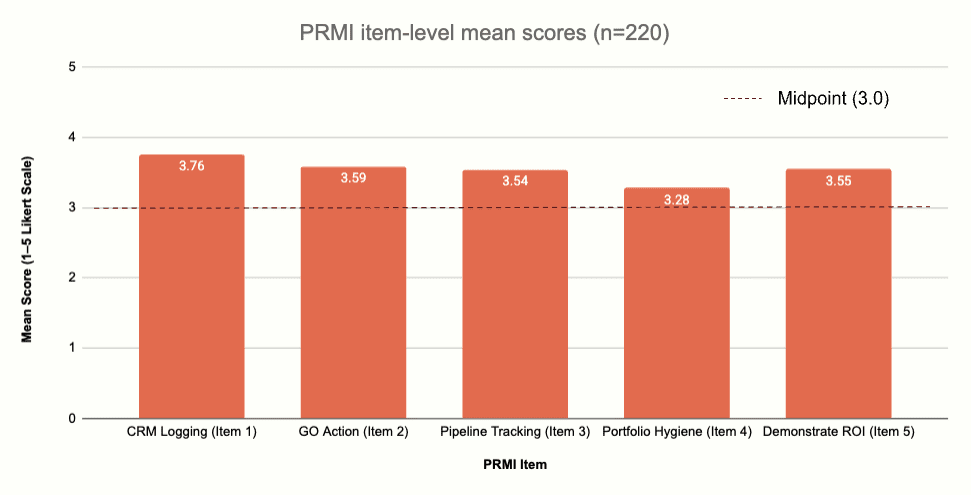

Item-level analysis

Examining the five PRMI items individually reveals that CRM logging (mean 3.76) is the highest-scoring practice, while portfolio hygiene (mean 3.28) is the lowest. This pattern makes intuitive sense: logging research in a CRM is a relatively straightforward procedural step, while maintaining active portfolio hygiene—regularly reviewing, reassigning, and removing prospects—requires ongoing organizational discipline. Demonstrating ROI (mean 3.55) and pipeline tracking (mean 3.54) fall in the middle, suggesting moderate adoption of these more advanced practices.

Revenue influence

This section explores the direct financial outcomes reported by participating organizations, focusing on the revenue that research operations influence. The primary and most robust finding thus far is a strong, statistically significant correlation between an organization’s Prospect Research Maturity Index (PRMI) score and its self-reported philanthropic revenue influenced by research. Despite the enormous variation in self-reported influenced revenue, the link between operational maturity and financial outcomes persists even when controlling for organizational revenue. Furthermore, we examine how maturity directly relates to an organization’s ability to track key pipeline outcomes, revealing that many organizations cannot improve what they do not measure.

A note on framing influence: All revenue-related findings in this section are based on self-reported estimates using banded response options (e.g., “$1M–$4.9M”). We model these using midpoint values, which introduces band-estimation noise. All correlations reflect associations among participating organizations, not causal relationships, and should not be extrapolated to the broader population.


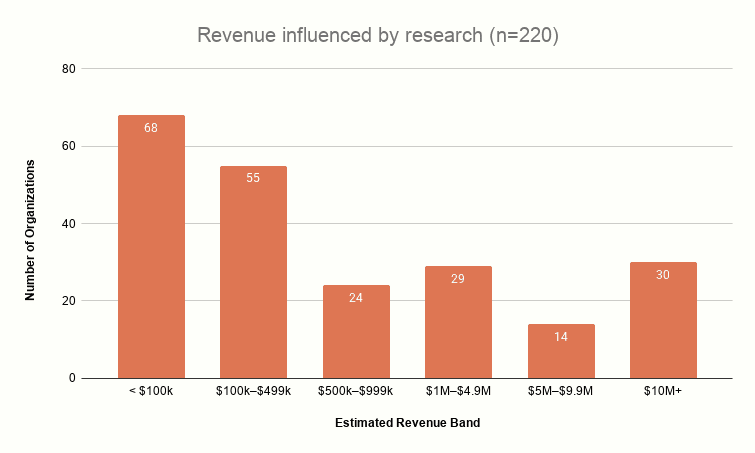

Revenue influenced by research

Among all 220 respondents, self-reported revenue influenced by research spans the full range of response bands. The median falls at $299,500 (IQR: $50,000–$2,950,000), reflecting enormous variation driven by both organization size and the maturity of revenue-attribution practices. The wide IQR underscores that “revenue influenced” means very different things across the sample—from small organizations attributing under $100,000 to large institutions reporting $10 million or more.

PRMI and revenue influence

The strongest statistical signal in this study links maturity to revenue. PRMI score correlates with revenue influenced at ρ = 0.419 (p < 0.001, n=220), and this relationship survives partial correlation controlling for organizational revenue (ρ = 0.278, p < 0.001). Among respondents reporting higher data confidence (Very or Moderately confident, n=217), the association remains robust at ρ = 0.408 (p < 0.001).
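To make the two statistics concrete, here is a small sketch of Spearman's ρ (Pearson correlation computed on average ranks) and a first-order partial correlation that removes a third variable. Whether the study computed its partial correlation on ranks in exactly this way is not stated, so this is an illustration of the general technique. The data are invented.

```python
from math import sqrt


def average_ranks(values: list) -> list:
    """Rank values from 1..n, giving tied values the average of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        avg = (i + j) / 2 + 1  # average of 1-based positions i+1 .. j+1
        for k in range(i, j + 1):
            ranks[order[k]] = avg
        i = j + 1
    return ranks


def pearson(x: list, y: list) -> float:
    """Pearson correlation of two equal-length sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / sqrt(sxx * syy)


def spearman(x: list, y: list) -> float:
    """Spearman's rho: Pearson correlation of the rank-transformed data."""
    return pearson(average_ranks(x), average_ranks(y))


def partial_spearman(x: list, y: list, z: list) -> float:
    """Rank correlation of x and y after removing the association each has with z."""
    rxy, rxz, ryz = spearman(x, y), spearman(x, z), spearman(y, z)
    return (rxy - rxz * ryz) / sqrt((1 - rxz ** 2) * (1 - ryz ** 2))


# Invented data: x = maturity score, y = revenue influenced, z = organizational revenue.
x = [1, 2, 3, 4, 5]
y = [2, 1, 4, 3, 5]
z = [1, 3, 2, 5, 4]
rho_xy = spearman(x, y)
rho_xy_given_z = partial_spearman(x, y, z)
```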

The practical interpretation: among participating organizations, those reporting more mature research practices also report influencing more philanthropic revenue. While we cannot determine whether maturity causes higher revenue influence (or whether organizations that raise more simply have the resources to adopt mature practices), the consistency of this signal across subsamples suggests a real underlying association worth further investigation.

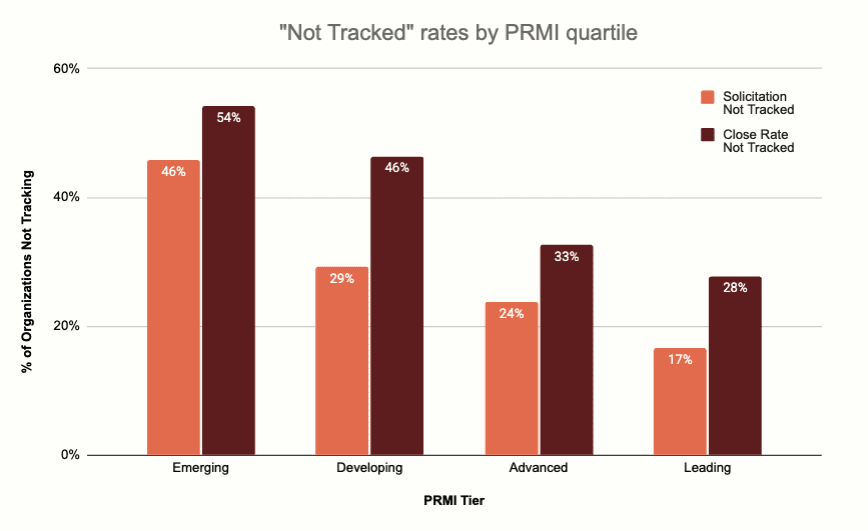

“Not Tracked” as a maturity signal

One of the more revealing findings is the relationship between maturity and data visibility. Among Emerging organizations, 46% do not track solicitation rates and 54% do not track close rates. These percentages drop steadily across the maturity spectrum: Leading organizations show 17% and 28% not-tracked rates, respectively. The ability to track pipeline outcomes is itself a component of maturity—organizations cannot improve what they do not measure.

Help us build the benchmark you deserve!

This study is designed to be replicated annually. Your participation this year directly shapes the benchmarks that the entire profession will reference. The next time you need to justify a hire, defend a budget, or explain the value of research to a board—these are the numbers you’ll reach for. Make sure they include your voice.

Every additional response makes the benchmarks more reliable, the subgroup analyses more robust, and the findings more defensible when you take them to your leadership.

Additional correlations: Annotations and monitoring status

The full correlation analysis produced 38 pairwise Spearman correlations across the n = 220 dataset. Beyond those reported earlier, the remaining correlations fall into two categories: findings that confirm what the field would expect and findings that warrant continued monitoring as the dataset grows.

Noted—Not surprised

These correlations confirm structural relationships that any experienced prospect development professional would predict. They validate the dataset’s internal consistency more than they reveal new insights.

Total Staff FTE ↔ MGO FTE (ρ = +0.880, p < 0.001, n = 220): Larger advancement shops employ more gift officers. This is definitional. Noted as a dataset validity check.

Total Staff FTE ↔ Research FTE (ρ = +0.834, p < 0.001, n = 220): Same logic. Research headcount scales with overall department size.

MGO FTE ↔ Total Revenue (ρ = +0.814, p < 0.001, n = 220): Organizations with more frontline fundraisers report more total revenue. The causal direction is ambiguous and likely bidirectional — larger revenue justifies more MGOs, and more MGOs produce more revenue.

MGO FTE ↔ MG Revenue (ρ = +0.809, p < 0.001, n = 220): Same as above, specific to major gift revenue.

Research FTE ↔ Total Revenue (ρ = +0.773, p < 0.001, n = 220): Bigger organizations invest more in research.

Research Spend ↔ Total Revenue (ρ = +0.763, p < 0.001, n = 220): Budget scales with organizational size.

MGO FTE ↔ Research Spend (ρ = +0.761, p < 0.001, n = 220): Organizations that invest in frontline fundraising also invest in the research that supports it. The two budget lines co-scale.

Research FTE ↔ MG Revenue (ρ = +0.760, p < 0.001, n = 220): More researchers in organizations raising more in major gifts. Size-driven.

Research Spend ↔ MG Revenue (ρ = +0.740, p < 0.001, n = 220): Same pattern for budget rather than headcount.

Research FTE ↔ Prospects Qualified (ρ = +0.737, p < 0.001, n = 220): More researchers produce more qualified prospects. Expected, though the strength of the association (strong, not just moderate) is worth noting — it suggests research staffing is genuinely the binding constraint on pipeline input for most organizations.

Research Spend ↔ Prospects Qualified (ρ = +0.729, p < 0.001, n = 220): The budget version of the same finding.

Tool Spend ↔ Total Revenue (ρ = +0.721, p < 0.001, n = 220): Larger organizations spend more on research tools.

Research FTE ↔ Tool Spend (ρ = +0.703, p < 0.001, n = 220): Organizations with more researchers buy more tools. These are complementary inputs, not substitutes.

Tool Spend ↔ Prospects Qualified (ρ = +0.663, p < 0.001, n = 220): Higher tool investment is associated with more qualified output, though we cannot separate this from the size effect: larger organizations both spend more and qualify more.

MG Threshold ↔ Revenue (ρ = +0.655, p < 0.001, n = 220): Larger organizations set higher major gift floors. A $100M+ university defining “major” at $100k and a $2M nonprofit defining it at $1k are both behaving rationally.

Research FTE ↔ Prospects Researched (ρ = +0.649, p < 0.001, n = 220): More researchers research more prospects.

Portfolio Size ↔ Revenue (ρ = +0.535, p < 0.001, n = 220): Bigger organizations manage larger portfolios.

MGO FTE ↔ Portfolio Size (ρ = +0.411, p < 0.001, n = 220): More gift officers means a larger aggregate portfolio, though the moderate (rather than strong) strength suggests wide variation in per-MGO caseloads across the sample.

Continuing to monitor

These correlations are either operationally interesting, behaviorally revealing, weaker than expected, or outright null in ways that challenge conventional assumptions. As the dataset grows beyond n = 220, we are watching to see whether these relationships strengthen, weaken, or resolve.

Lookup Frequency ↔ PRMI (ρ = +0.390, p < 0.001, n = 220): This is the strongest behavioral (non-size-driven) predictor of maturity in the dataset. Organizations where researchers look up donors more frequently (daily or weekly, rather than monthly or rarely) score meaningfully higher on PRMI. The finding is intriguing because lookup frequency is a habit, not a resource. It costs nothing to look up a donor before a meeting. We want to see whether this holds with a larger sample and whether it interacts with organization size. If it holds, it may be the single most actionable recommendation in the study: look things up more often.

Research FTE ↔ PRMI (ρ = +0.384, p < 0.001, n = 220): Having more researchers is associated with higher maturity, but the correlation disappears when controlling for organization size (within the $20M–$99.9M band alone, ρ = −0.034, p = 0.79, n = 63). This suggests the relationship is confounded by scale — larger organizations have both more researchers and more mature operations for reasons that may be independent. We are monitoring to determine whether research FTE has an independent effect on maturity or whether it is primarily a proxy for organizational size.

MGO FTE ↔ PRMI (ρ = +0.326, p < 0.001, n = 220): Same pattern as above. More MGOs is associated with maturity, but likely reflects organizational scale rather than a direct causal path. Monitoring for the same size-confound question.

Pct Assigned ↔ PRMI (ρ = +0.312, p < 0.001, n = 220): Organizations with higher assignment rates score higher on maturity. This is a process discipline metric: assigning prospects to named gift officers is a deliberate management action, not a resource question. The correlation is moderate and survives intuition checks: you would expect organizations that bother to assign prospects to also log in the CRM and track solicitations. We need more data to determine whether assignment rate is predictive of maturity independent of organization size, or whether it is simply that larger, better-staffed shops assign more.

Batch Screening Frequency ↔ PRMI (ρ = +0.276, p < 0.001, n = 220): Organizations that screen more frequently score higher on maturity. Like lookup frequency, this is a behavioral choice, but it also correlates with budget (screening costs money). We are watching to see whether this is an independent practice effect or a spending proxy.

Pct Assigned ↔ Revenue (ρ = +0.238, p < 0.001, n = 220): Higher assignment rates are weakly but significantly associated with more revenue. The direction is promising—it suggests that the act of formally assigning prospects matters for outcomes—but the effect is modest and could be confounded by size. Needs a larger sample to draw actionable conclusions.

Portfolio Size ↔ PRMI (ρ = +0.223, p < 0.001, n = 220): Larger portfolios are associated with higher maturity. This could mean mature organizations cultivate more prospects, or simply that larger organizations (which tend to be more mature) have bigger portfolios. The direction of the effect is uncertain.

Inactive Prospect Rate ↔ PRMI (ρ = −0.148, p < 0.05, n = 220): This is the only negative correlation of note: organizations with higher maturity carry fewer inactive donors in their portfolios. The effect is weak but directionally meaningful and aligns with the PRMI portfolio hygiene item. We are monitoring to see whether this strengthens with a larger sample. If it does, it supports a concrete recommendation: prune your portfolio.

Research FTE-to-MGO Ratio ↔ PRMI (ρ = +0.290, p < 0.001, n = 220): Reported in detail in the staffing section above. Organizations that invest proportionally more research capacity relative to their frontline are more mature. The ratio is independent of absolute headcount, which makes it a more interesting signal than raw FTE counts. Monitoring closely.

Research FTE-to-MGO Ratio ↔ Revenue (ρ = +0.216, p = 0.001, n = 220): A higher research-to-MGO ratio is weakly associated with higher revenue. The effect is modest and entangled with org size. Needs more data.

Research FTE-to-MGO Ratio ↔ Prospects Qualified (ρ = +0.286, p < 0.001, n = 220): Organizations with proportionally more research capacity produce more qualified prospects per cycle. This is one of the more promising signals for a staffing-investment argument, because it speaks to pipeline throughput rather than just organizational size.

Research FTE-to-MGO Ratio ↔ Pct Assigned (ρ = +0.144, p = 0.03, n = 220): Barely significant. A higher research-to-MGO ratio is weakly associated with higher assignment rates. This makes intuitive sense, since more research capacity means more prospects are ready for assignment, but the effect is marginal. Needs a larger sample before drawing conclusions.

Time to Assign ↔ Revenue (ρ = +0.142, ns, n = 154): Not significant. Faster assignment does not appear to predict revenue in this sample. This is a null finding we are watching because the conventional wisdom (“speed to assignment matters”) would predict a relationship here. The reduced n (154, because 66 respondents selected “Not tracked”) may be suppressing a real signal, or the conventional wisdom may be wrong for this population. Needs more data.

Time to Assign ↔ PRMI (ρ = +0.033, ns, n = 154): Essentially zero. Speed of assignment is not associated with maturity. This is a surprising null—you might expect mature organizations to hand off prospects faster. One possible explanation is that mature organizations also conduct more thorough qualification, which takes longer, offsetting any efficiency gains. Another is that the “Not tracked” respondents are the least mature, and their exclusion from this analysis removes the low end of the distribution.

Null Findings—Noted and worth reporting

These are correlations that returned no significant result. In several cases, the absence of a relationship is the finding.

Research FTE ↔ Cost per Qualified (ρ = +0.108, ns, n = 220): Adding more researchers does not reduce the cost of producing a qualified prospect. This is consistent with constant returns to scale in research: each additional FTE produces proportionally more output at roughly the same unit cost, rather than driving efficiencies. Important for budget conversations: more researchers means more prospects, not cheaper prospects.

MGO FTE ↔ Cost per Qualified (ρ = +0.081, ns, n = 220): More gift officers do not lower the cost of research output either. The two functions scale independently on unit economics. Noted.

Qualified per FTE ↔ PRMI (ρ = −0.099, ns, n = 220): Maturity is not associated with individual researcher throughput. Mature organizations do not produce more qualified prospects per researcher—they produce more qualified prospects in total because they have more researchers. This is a meaningful distinction for anyone tempted to use PRMI as a productivity metric. Maturity measures how well the machine works, not how fast each person works.

Prospects per FTE ↔ PRMI (ρ = +0.045, ns, n = 220): Same finding for raw research volume per person. Throughput per FTE is not a maturity signal. Noted.

Cost per Qualified ↔ PRMI (ρ = +0.026, ns, n = 220): This is the null finding we highlight most often: maturity shows no association with how much you spend per prospect. Organizations can be operationally mature at any cost point. This directly counters the objection “we can’t afford to be mature.” Maturity is about practices (logging, tracking, acting, pruning, demonstrating), not about dollars per qualified prospect.

Cost per Qualified ↔ Total Revenue (ρ = +0.046, ns, n = 220): Unit cost does not vary with organizational revenue. Small organizations and large organizations spend roughly similar amounts per qualified prospect. This suggests that cost per qualified is driven by local factors (salary markets, tool stack, prospect complexity) rather than organizational scale.

Effort per Qualified ↔ PRMI (ρ = +0.033, ns, n = 220): How many hours you spend per profile is unrelated to maturity. Mature organizations do not spend more (or less) time on each prospect—they simply handle the overall workflow more systematically.

Effort per Qualified ↔ Revenue (ρ = +0.072, ns, n = 220): Spending more hours per prospect does not predict higher revenue. This counters the “more thorough research yields more money” assumption, at least at the organizational level. Quality of research may matter, but hours invested per profile is not a reliable proxy for quality.

Qual Criteria Count ↔ Revenue (ρ = +0.026, ns, n = 220): Using more qualification criteria does not predict higher revenue. Whether you use one gate or four gates, it doesn’t appear to matter for outcomes. What likely matters is whether the criteria are consistently applied, not how many there are.

Qual Criteria Count ↔ PRMI (ρ = +0.103, ns, n = 220): Same null for maturity. Number of qualification criteria is not a maturity signal.

Research FTE-to-MGO Ratio ↔ Cost per Qualified (ρ = +0.053, ns, n = 220): Investing proportionally more in research relative to the frontline is not associated with higher or lower unit costs. The ratio is associated with throughput and maturity, not with unit cost. Noted and reported in the staffing section.

All correlations are Spearman rank-order (ρ), appropriate for ordinal band data. All significance tests are two-tailed. Correlations describe association among the 220 participating organizations. They do not establish causation and should not be projected onto the broader field without accounting for the convenience sample design.
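As a rough check on the significance labels above, the sketch below computes the t statistic commonly used as a large-sample approximation for testing whether a Spearman correlation differs from zero. The report does not state how its p-values were calculated, so this approximation and the function name `spearman_t` are assumptions for illustration only.

```python
import math

def spearman_t(rho, n):
    """Approximate t statistic for testing rho = 0, with n - 2 degrees of freedom.

    Assumption: the study's own p-values may have been computed differently.
    """
    return rho * math.sqrt((n - 2) / (1 - rho ** 2))

# Two correlations of nearly identical size, taken from the findings above.
t_pct_assigned = spearman_t(0.144, 220)    # Research FTE-to-MGO Ratio vs Pct Assigned
t_time_to_assign = spearman_t(0.142, 154)  # Time to Assign vs Revenue (reduced n)

print(round(t_pct_assigned, 2), round(t_time_to_assign, 2))
```

The two-tailed critical value at α = 0.05 is about 1.97 for samples of this size. The first statistic (about 2.15) clears it and the second (about 1.77) does not, which illustrates how the reduced n of 154 can leave a similar-sized correlation non-significant.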

Methodology + Advanced analytical findings

Study purpose

The 2026 North American Prospect Development Benchmark Study was designed to establish the first comprehensive, data-driven benchmarks for the prospect development profession. It aims to provide practitioners with defensible metrics for evaluating their operations, advocating for resources, and benchmarking against the field.

Study design

This is a cross-sectional, self-reported survey study. The instrument was distributed electronically to prospect development professionals across North America via a single web link collector (SurveyMonkey). The survey consisted of 44 questions spanning organizational demographics, research operations, pipeline metrics, maturity practices, and revenue influence.

Sample & data quality

A total of 233 responses were received. The following exclusion criteria were applied to produce the analytical sample of 220:

- Q1 Screening (“Are you the best person to answer?”): 11 respondents answered “No” and were excluded.

- Q2 Screening (“Does your organization have a prospect research or prospect management function?”): 1 respondent answered “No” and was excluded.

- Duplicate response: 1 duplicate respondent identified by matching email address was excluded (later submission retained).

The SurveyMonkey quality classifier flagged 30 responses for speeding (completing the survey faster than expected). These were retained after review, as the speed flag alone did not indicate invalid data—respondents familiar with their metrics could reasonably complete the survey quickly.
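The exclusion rules above can be sketched as a small filter. This is a minimal illustration, not the study's analytical code: the field names (`q1_best_person`, `q2_has_function`, `email`) are hypothetical, and the input is assumed to be sorted by submission time so that the later of two duplicates is the one kept.

```python
def apply_exclusions(responses):
    """Apply the two screening questions, then de-duplicate by email.

    Assumptions: each response is a dict with hypothetical keys, and the
    list is ordered by submission time (earliest first).
    """
    screened = [
        r for r in responses
        if r["q1_best_person"] == "Yes" and r["q2_has_function"] == "Yes"
    ]
    latest_by_email = {}
    for r in screened:
        # A later submission overwrites an earlier one with the same email.
        latest_by_email[r["email"].strip().lower()] = r
    return list(latest_by_email.values())

# Count check using the figures reported above: 233 received,
# minus 11 (Q1), 1 (Q2), and 1 duplicate.
analytical_n = 233 - 11 - 1 - 1
print(analytical_n)
```

Speed-flagged responses are not filtered here, matching the decision described above to retain them after review.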

Prospect Research Maturity Index (PRMI)

The PRMI is an exploratory composite index based on five Likert-scale items (1–5 each): CRM logging, gift officer action on research, pipeline tracking, portfolio hygiene, and ability to demonstrate ROI. The raw sum (range 5–25) is normalized to a 0–100 scale. The instrument achieves a Cronbach’s alpha of 0.733, which clears the commonly cited 0.70 floor for acceptable internal consistency but falls below the stricter 0.75 threshold applied in this study. The original study design specified eight items; only five were fielded, which may contribute to the lower-than-expected reliability. The PRMI should therefore be treated as an exploratory tool rather than a validated psychometric instrument.
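For readers who want to reproduce a reliability figure of this kind on their own data, the sketch below applies the standard Cronbach's alpha formula, α = k/(k−1) × (1 − Σ item variances / variance of the summed score). The function name and the toy data are illustrative assumptions; the study's respondent-level data is not reproduced here.

```python
from statistics import pvariance

def cronbach_alpha(rows):
    """Cronbach's alpha for a list of respondents, each a list of k item scores."""
    k = len(rows[0])
    # Variance of each item across respondents.
    item_vars = [pvariance([row[i] for row in rows]) for i in range(k)]
    # Variance of each respondent's summed score.
    total_var = pvariance([sum(row) for row in rows])
    return (k / (k - 1)) * (1 - sum(item_vars) / total_var)

# Toy two-item example (not study data): items move together perfectly.
print(cronbach_alpha([[1, 1], [2, 2], [3, 3]]))
```

Population variance is used for both the items and the total; using sample variance for both would give the same ratio.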

Statistical approach

All correlation analyses use Spearman’s rank correlation (ρ), chosen because the survey data is predominantly ordinal (banded response options). Partial correlations control for organizational revenue as a potential confound. The significance threshold is α = 0.05 for all tests. Revenue modeling uses band midpoints with the understanding that this introduces estimation noise. All analyses are conducted on the full analytical sample (n=220) unless otherwise noted; reduced sample sizes for pipeline metrics reflect “Not tracked” responses, which are excluded from correlation analyses but reported as a separate finding.
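A minimal sketch of Spearman's rank correlation on banded data follows. Because many organizations fall in the same band, ties are common, so the sketch assigns tied values their average rank and then takes the Pearson correlation of the ranks. The function names are illustrative, and this is not the study's own code.

```python
def average_ranks(values):
    """Rank values 1..n, giving tied values the mean of the positions they span."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j to the end of the run of tied values.
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        mean_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = mean_rank
        i = j + 1
    return ranks

def pearson(x, y):
    """Pearson correlation of two equal-length numeric lists."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = sum((a - mx) ** 2 for a in x) ** 0.5
    sy = sum((b - my) ** 2 for b in y) ** 0.5
    return cov / (sx * sy)

def spearman(x, y):
    """Spearman's rho: Pearson correlation of the average ranks."""
    return pearson(average_ranks(x), average_ranks(y))

# Band codes (1 = lowest band) for a handful of made-up organizations.
print(average_ranks([10, 20, 20, 30]))
```

In practice a statistics library such as SciPy (`scipy.stats.spearmanr`) would normally be used; the hand-rolled version is shown only to make the tie handling visible.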

Limitations

- Self-reported data: All metrics are self-reported by survey respondents and subject to recall bias and estimation error.

- Convenience sample: The sample is not randomly drawn and may not represent the full population of prospect development operations in North America.

- Banded responses: Revenue and operational metrics use categorical bands; midpoint modeling introduces systematic estimation noise.

- Cross-sectional design: The study cannot establish causal relationships. All findings are correlational.

- PRMI reliability: Cronbach’s alpha of 0.733 is below the 0.75 threshold; the index is exploratory, not validated.

- Community Foundation suppression: Results for the Community Foundation subgroup are suppressed because n = 4 is too small for reliable subgroup analysis.

Replication commitment

This study is designed to be replicated annually. The analytical code, variable definitions, and exclusion criteria are fully documented. Future administrations should aim to increase the sample size, refine the PRMI with additional items to improve reliability, and introduce longitudinal tracking to enable trend analysis.

Advanced analytical findings

This section presents the five core analytical tests specified in the study design, along with partial correlation results that control for organizational revenue as a potential confound.

Core Correlation Tests

| Test | Hypothesis | ρ | p-value | n | Interpretation |
| --- | --- | --- | --- | --- | --- |
| Test 1 | PRMI → Assignment Rate | 0.312 | < 0.001 | 220 | Significant |
| Test 2 | PRMI → Inactive Prospect % | −0.148 | 0.029 | 220 | Significant (weak) |
| Test 3 | Screening Freq → Qualified | 0.328 | < 0.001 | 220 | Significant |
| Test 4 | Spend → Productivity/FTE | −0.058 | 0.389 | 220 | Null (not significant) |
| Test 5 | PRMI → Revenue Influenced | 0.419 | < 0.001 | 220 | Significant (strongest) |

Table A. All five core tests use Spearman’s rank correlation (ρ), which is robust to the ordinal, banded nature of the survey data. Partial correlations controlling for organizational revenue are computed for Tests 1, 2, and 5. Tests 1 and 5 remain significant after adjustment; Test 2 narrowly misses the α = 0.05 threshold (partial ρ = −0.132, p = 0.051; see Table B).

Interpreting the null result (Test 4)

The absence of a relationship between research spend and productivity is a meaningful finding. It suggests that the determinants of research output per FTE are more complex than budget alone. Factors such as staff skill level, tool utilization, organizational workflow, and the nature of research requests likely matter more than the dollar amount invested. For practitioners, this finding argues against framing budget justification as a simple “more money = more output” proposition and instead supports emphasizing the quality and integration of research practices.

Partial correlations

| Relationship | Control Variable | Partial ρ | p-value | n |
| --- | --- | --- | --- | --- |
| PRMI → Assignment Rate | Org Revenue | 0.287 | < 0.001 | 220 |
| PRMI → Inactive Prospect % | Org Revenue | −0.132 | 0.051 | 220 |
| PRMI → Revenue Influenced | Org Revenue | 0.278 | < 0.001 | 220 |

Table B. Partial Spearman correlations controlling for organizational revenue (midpoint). The PRMI–revenue-influenced association attenuates from ρ = 0.419 to ρ = 0.278 but remains highly significant, suggesting that maturity’s relationship with revenue influence is not merely a proxy for organizational size.
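One common way to obtain a partial Spearman correlation is to apply the first-order partial correlation formula to the three pairwise rank correlations. The sketch below shows that formula. The report does not state which method was used, and it does not publish the correlations of PRMI or revenue influenced with organizational revenue, so the two control-variable inputs in the example are hypothetical placeholders.

```python
import math

def partial_corr(r_xy, r_xz, r_yz):
    """First-order partial correlation of x and y, controlling for z."""
    return (r_xy - r_xz * r_yz) / math.sqrt((1 - r_xz ** 2) * (1 - r_yz ** 2))

# r_xy is the reported PRMI vs Revenue Influenced correlation (Table A).
# r_xz and r_yz are HYPOTHETICAL values chosen only to show attenuation.
adjusted = partial_corr(r_xy=0.419, r_xz=0.30, r_yz=0.55)
print(round(adjusted, 3))
```

When both variables correlate positively with the control, the adjusted coefficient is smaller than the raw one, which is the pattern Table B reports.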

Glossary

Organizational profile

Organization Type—The institutional category of the respondent’s employer. Options in this survey: Higher Education, Healthcare, Independent Nonprofit, Community Foundation, or Other.

Country—The nation where the respondent’s organization is headquartered: United States, Canada, or Other.

Total Organizational Revenue—The organization’s total annual revenue (or operating budget) from all sources, not limited to philanthropy. Collected in bands: Under $1M, $1M–$4.9M, $5M–$19.9M, $20M–$99.9M, $100M+.

Major Gift Revenue—The total dollar amount raised through major gifts specifically (as defined by the organization’s own major gift threshold) in the most recent fiscal year. Collected in bands: Under $250k, $250k–$999k, $1M–$4.9M, $5M–$9.9M, $10M+.

Total Advancement Staff FTE—The full-time equivalent headcount of the entire advancement or development department, including frontline fundraisers, research staff, operations, stewardship, annual giving, and leadership. Bands: 1–5, 6–15, 16–40, 41–100, 101+.

Major Gift Officer (MGO) FTE—The number of full-time equivalent frontline fundraisers whose primary responsibility is managing a portfolio of major gift prospects through cultivation, solicitation, and stewardship. Bands: 1–2, 3–5, 6–10, 11–20, 21+.

Research staffing & investment

Has Dedicated Research Staff—Whether the organization employs at least one person (at any FTE level) whose primary role is prospect research or prospect development. Yes or No.

Research FTE—The full-time equivalent headcount dedicated to prospect research and/or prospect development functions. A part-time researcher working half-time would be 0.5 FTE; two full-time researchers would be 2.0 FTE. Bands: <0.5, 0.5–0.99, 1–1.99, 2–3.99, 4–5.99, 6–8.99, 9–12.99, 13–15.99, 16+.

Research Model—How an organization structures its prospect research function. Options: Fully in-house (all research done by internal staff), Mostly in-house + external (primarily internal with some outsourced work), Mostly outsourced (primarily contracted to external vendors), Distributed/no central (research responsibilities spread across multiple roles with no dedicated research unit).

Annual Research Spend—Total annual budget allocated to prospect research operations, including staff salaries, benefits, and overhead—but excluding tool/platform subscriptions, which are captured separately. Bands: Under $25k, $25k–$74.9k, $75k–$149.9k, $150k–$299.9k, $300k+.

Annual Tool Spend—Total annual spending on prospect research platforms, databases, screening services, and technology subscriptions (e.g., iWave, WealthEngine, DonorSearch, ResearchPoint, LexisNexis). Excludes CRM costs. Bands: Under $10k, $10k–$24.9k, $25k–$49.9k, $50k–$99.9k, $100k+.

Research volume & efficiency

Prospects Researched per Year—The total number of individual prospects on whom the research team completed any level of research (profile, brief, screening review, etc.) in the most recent fiscal year. Bands: <100, 100–249, 250–499, 500–999, 1,000–2,499, 2,500+.

Prospects Qualified per Year—The number of researched prospects who were formally determined to meet the organization’s qualification criteria and moved into the active pipeline for assignment to an MGO. Bands: <50, 50–99, 100–199, 200–399, 400–799, 800+.

Effort per Qualified Prospect—The average number of staff-hours spent to research and qualify a single prospect, from initial identification through the qualification decision. Bands: <30 min, 30–59 min, 1–2 hrs, 2–4 hrs, 4–8 hrs, 8+ hrs.

Time to Assign—The elapsed calendar time between when a prospect is qualified by the research team and when that prospect is formally assigned to an MGO’s portfolio. Bands: <2 weeks, 2–4 weeks, 1–2 months, 3–5 months, 6+ months, Not tracked. Longer times may indicate bottlenecks in handoff processes or capacity constraints among MGOs.

Batch Screening Frequency—How often the organization runs its full constituent database (or a large segment of it) through a third-party wealth/philanthropic screening service to refresh capacity ratings, identify new prospects, and flag changes. Options: Continuous/automated, Quarterly, Semi-annually, Annually, Ad hoc, Never.

Lookup Frequency—How often research staff perform individual prospect lookups (as opposed to batch screening)—real-time research triggered by a specific request from an MGO, event, or workflow. Options: Daily, Weekly, Monthly, Rarely, Never.

Qualification criteria

Qualification Criteria—The specific standards an organization uses to determine whether a prospect is ready for assignment to an MGO. The survey asked respondents to select all that apply from four options:

Capacity Threshold—The prospect’s estimated giving capacity meets or exceeds the organization’s major gift threshold.

Affinity Confirmed—Evidence exists that the prospect has a meaningful connection to the organization (alumni status, event attendance, board service, family ties, etc.).

Engagement Activity—The prospect has demonstrated recent engagement (gift history, event attendance, volunteer participation, website activity, etc.).

Committee Approval—A formal committee or review process has approved the prospect for assignment.

Qualification Criteria Count—The number of the four criteria above that an organization uses (0–4, plus “other”). Organizations using more criteria have more structured qualification processes, though the count is not significantly correlated with revenue or PRMI in this dataset.

Pipeline & portfolio

Portfolio Size—The total number of prospects actively managed across all MGO portfolios in the organization. This is the denominator for the percentage-based pipeline metrics below. Bands: <50, 50–99, 100–149, 150–199, 200+.

Assignment Rate (Pct Assigned)—The percentage of the active prospect portfolio currently assigned to a specific MGO for relationship management. A 37% assignment rate on a 125-person portfolio means roughly 46 prospects have a named MGO responsible for them. Bands: <25%, 25–49%, 50–69%, 70–84%, 85%+.

Pct Not Assigned—The complement: the percentage of the portfolio without an assigned MGO. These prospects are identified and may be qualified, but are not in active cultivation.

Pct Solicited—The percentage of the total portfolio that received a formal solicitation (ask) during the most recent fiscal year. This is a percentage of the full portfolio, not of the assigned subset. Bands: <10%, 10–24%, 25–39%, 40–59%, 60%+, Not tracked. The “Not tracked” responses (n = 56 of 220) are a significant finding in themselves.

Pct Closed—The percentage of the total portfolio where at least one gift closed during the most recent fiscal year. Again, this is a percentage of the full portfolio. Bands: <5%, 5–9%, 10–19%, 20–34%, 35%+, Not tracked.

Major Gift Threshold—The minimum dollar amount at which the organization classifies a gift as a “major gift.” This varies widely by institution—a $1,000 gift is major at a small community nonprofit; a $100,000 gift may be the floor at a large university. Bands: $1k–$4.9k, $5k–$9.9k, $10k–$24.9k, $25k–$99.9k, $100k+.

Inactive Prospect Rate—The estimated percentage of the portfolio that is effectively inactive—prospects who are unlikely to give, have become unresponsive, or should have been removed but remain on the books. High inactive prospect rates inflate portfolio metrics and mask true pipeline health. Bands: <10%, 10–24%, 25–39%, 40–59%, 60%+.

Outcome & impact measures

Revenue Influenced by Research—The total dollar amount of philanthropic revenue that the respondent attributes to prospects who were researched by the prospect development team. This is a self-reported estimate and subject to attribution methodology differences across organizations. Bands: <$100k, $100k–$499k, $500k–$999k, $1M–$4.9M, $5M–$9.9M, $10M+.

Pct Revenue from Researched Prospects—The percentage of total philanthropic revenue that came from prospects who had been formally researched. This is a measure of research coverage and influence on the revenue base. Bands: <25%, 25–49%, 50–69%, 70–84%, 85%+, Not tracked.

Close Rate Comparison—Whether prospects who were formally researched close at a higher, similar, or lower rate than prospects who were not researched. Self-reported perception. Options: Close significantly higher, Close slightly higher, Close at similar rates, Close lower, Not enough data.

Gift Size Comparison—Whether gifts from researched prospects tend to be larger, similar, or smaller than gifts from non-researched prospects. Options: Give significantly larger gifts, Give slightly larger gifts, Give similar gift sizes, Give smaller gifts, Not enough data.

Elasticity—Whether the respondent perceives that researched prospects increase their giving over time (i.e., whether research-informed cultivation produces donor “stretch”). Options: <5% increase, 5–10% increase, 10–20% increase, 20%+ increase, No change, Not sure.

PRMI—Prospect Research Maturity Index †

PRMI—A composite index created for this study to measure how operationally mature a prospect research function is, independent of its size or budget. It is built from five Likert-scaled items (each scored 1–5), summed to a raw score of 5–25, then normalized to a 0–100 scale.

The five items:

- CRM Logging Discipline (prmi_1)—”How consistently does your team log research activity and findings in the CRM?” Measures whether research outputs are documented systematically or exist only in files, emails, or individual memory.

- Go/No-Go Action on Research (prmi_2)—”When research is delivered, how consistently do gift officers take action on it?” Measures whether research is used or ignored—a proxy for the research-to-fundraising feedback loop.

- Solicitation Tracking (prmi_3)—”How well does your organization track which prospects have been solicited and the outcome?” Measures whether the pipeline is visible from end to end or whether solicitation activity is a black box.

- Portfolio Hygiene (prmi_4)—”How regularly does your team review and clean the prospect portfolio?” Measures whether inactive prospects are removed, assignments are updated, and the portfolio reflects reality.

- Demonstrate ROI (prmi_5)—”How confident is your team in demonstrating the return on investment of prospect research?” Measures whether the research function can articulate its value in terms leadership understands.

PRMI Quartile Tiers:

- Emerging (0–25): Minimal formal processes; research is ad hoc or reactive.

- Developing (26–50): Some processes in place but inconsistently followed; data quality is uneven.

- Advanced (51–75): Consistent processes; CRM is reasonably well-maintained; research is integrated into fundraising workflows.

- Leading (76–100): Systematic, data-driven operations; strong feedback loops; can demonstrate ROI.

Cronbach’s α = 0.733—This is the internal consistency reliability coefficient for the five PRMI items. Values above 0.70 are generally considered acceptable, so the five items hang together reasonably well as a measure of a single underlying construct. The value falls below the stricter 0.75 threshold cited in the methodology, which is why the PRMI is treated as exploratory.
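The PRMI scoring and tiering described in this section can be sketched as follows. The source says only that the raw sum is normalized to a 0–100 scale; the min-max rescaling used here, (raw − 5) × 100 ÷ 20, is an assumption, as are the function names.

```python
def prmi_score(items):
    """Convert five Likert responses (each 1-5) to a 0-100 PRMI score.

    Assumption: min-max rescaling of the raw sum (5..25) onto 0..100.
    """
    if len(items) != 5 or any(not 1 <= v <= 5 for v in items):
        raise ValueError("expected five responses scored 1-5")
    raw = sum(items)
    return (raw - 5) * 100 / 20

def prmi_tier(score):
    """Map a 0-100 score to the tier labels listed above."""
    if score <= 25:
        return "Emerging"
    if score <= 50:
        return "Developing"
    if score <= 75:
        return "Advanced"
    return "Leading"

# Illustrative responses for prmi_1 through prmi_5 (not study data).
example = prmi_score([4, 4, 3, 4, 3])
print(example, prmi_tier(example))
```

Under this rescaling every score is a multiple of 5, so no score can fall between tier boundaries such as 25 and 26.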

Derived metrics (Calculated, not directly surveyed)

Prospects per FTE—Prospects researched (midpoint) ÷ research FTE (midpoint). Measures raw research throughput per full-time equivalent researcher. Example: 375 prospects researched by 3.0 FTE = 125 prospects/FTE.

Qualified per FTE—Prospects qualified (midpoint) ÷ research FTE (midpoint). Measures the output of the qualification process per researcher. This is a tighter measure of productivity than prospects/FTE because it captures only the prospects that cleared the qualification bar.

Cost per Qualified Prospect—Annual research spend (midpoint) ÷ prospects qualified (midpoint). An efficiency metric expressing what it costs, in research budget dollars, to produce one qualified prospect ready for assignment. The median in the study is approximately $500.
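The derived ratios above are simple divisions of band midpoints. A minimal sketch, with illustrative function names, follows. The first call reproduces the worked example given for Prospects per FTE. The second pairs two bands chosen arbitrarily for illustration and does not represent any respondent.

```python
def per_fte(count_midpoint, fte_midpoint):
    """Prospects (researched or qualified) per research FTE."""
    return count_midpoint / fte_midpoint

def cost_per_qualified(spend_midpoint, qualified_midpoint):
    """Research budget dollars per qualified prospect."""
    return spend_midpoint / qualified_midpoint

# Worked example from the glossary: 375 prospects researched by 3.0 FTE.
prospects_per_fte = per_fte(375, 3.0)

# Hypothetical pairing: $75k-$149.9k spend band (midpoint $112,450)
# with the 200-399 qualified band (midpoint 299.5).
unit_cost = cost_per_qualified(112_450, 299.5)

print(prospects_per_fte, round(unit_cost, 2))
```

Because both numerator and denominator are midpoints, each ratio inherits the estimation noise of two bands rather than one.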

Midpoint Band Modeling †—Because the survey collected most numeric responses in ranges (e.g., “$20M–$99.9M”), we convert each band to its midpoint for analysis. “$20M–$99.9M” becomes $59,950,000. This allows computation of medians, correlations, and derived ratios, with the caveat that all resulting figures are estimates—the true value for any given respondent could fall anywhere within their reported band.
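A minimal sketch of the midpoint conversion follows. The function name is illustrative. The source does not say what value is substituted for open-ended bands such as "$100M+", so the sketch raises an error for them rather than guessing.

```python
def band_midpoint(lower, upper):
    """Midpoint of a closed band; open-ended bands are left unresolved.

    Assumption: the treatment of open-ended bands is not documented in the
    report, so no value is invented for them here.
    """
    if upper is None:
        raise ValueError("open-ended band: substitution value not stated in the source")
    return (lower + upper) / 2

# The example from the text: "$20M-$99.9M" becomes $59,950,000.
revenue_mid = band_midpoint(20_000_000, 99_900_000)

# Another closed band from the glossary: "$75k-$149.9k" research spend.
spend_mid = band_midpoint(75_000, 149_900)

print(revenue_mid, spend_mid)
```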

Statistical concepts used in the report

Spearman Rank Correlation (ρ)—A non-parametric measure of the strength and direction of the monotonic relationship between two variables. Used throughout this study because the data is ordinal (band-based) rather than continuous. ρ = +1.0 means a perfect positive rank-order relationship; ρ = −1.0 means a perfect inverse relationship; ρ = 0 means no rank-order association. All reported correlations describe association, not causation.

p-value—The probability of observing a correlation as strong as (or stronger than) the one found, if the true correlation were zero. p < 0.001 means that, if there were truly no association, a correlation at least this strong would appear in fewer than 0.1% of samples. It is not the probability that the result is due to chance. The study flags three significance levels: * p < 0.05, ** p < 0.01, *** p < 0.001.

Median—The middle value when all responses are sorted. Used instead of mean (average) throughout the study because ordinal band data and revenue figures are heavily right-skewed: a few very large organizations would distort an average, but not a median.

About Kindsight

Kindsight builds technology that helps fundraisers make a difference. Founded on over three decades of innovation, and trusted by over 4,500 organizations worldwide, Kindsight is the market leader in advancement and fundraising software, supporting the education, healthcare, and nonprofit sectors to achieve their goals through smarter, more connected fundraising. Natively built upon the Salesforce architecture, Kindsight’s Fundraising Platform is anchored in the strength and flexibility of the Ascend CRM, powered by the trusted insights of iWave data, and unified by seamless workflows and connected data. Kindsight helps organizations discover the right donors, inspire personal connections at scale, and grow giving year after year. With industry-leading prospect research solutions, award-winning fundraising CRMs, a dynamic constituent portal, and an AI assistant built for modern fundraising, Kindsight’s product suite is truly changing the game for donor fundraising. Connect your story to donors who care about your cause—at any scale, in real time—that’s the power of Kindsight. Learn more at kindsight.io

About Apra International

Apra International is proud to serve as the practitioner advisor for Kindsight’s 2026 Prospect Development Benchmark Report. As the professional home for data-driven fundraising professionals, Apra advances the field by providing accessible learning and career pathways to upskill and lead in a technology-enabled, ethical, and rapidly evolving philanthropic landscape. We foster an inclusive, connected and collaborative global community while leading the development and dissemination of best practices, insights, and emerging trends. Learn more at aprahome.org.